Introduction

We are an experimental quantum optics group run by Kevin Resch, based in the Department of Physics & Astronomy and the Institute for Quantum Computing at the University of Waterloo.


We have a new paper out in Physical Review A called Enhanced-resolution chirped-pulse interferometry by Maggie Bérubé, Mike Mazurek (NIST), and Kevin Resch.

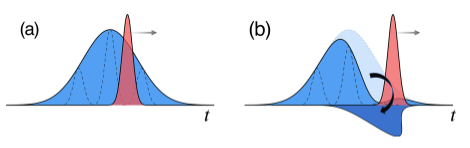

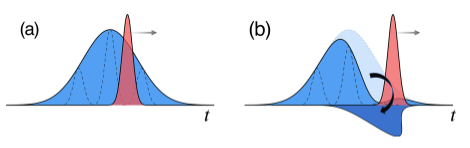

Abstract: Chirped-pulse interferometry (CPI) is a classical low-coherence interferometry technique with automatic dispersion cancellation and improved resolution over white-light interference. Previous work showed that CPI with linearly chirped Gaussian laser pulses achieves a √2 improvement in resolution over conventional white-light interferometry, but this is less than the factor of 2 improvement exhibited by a comparable quantum technique. In this work, we show how a particular class of nonlinearly chirped laser pulses can meet, and even exceed, the factor of 2 improvement in resolution. This enhanced-resolution CPI removes the resolution advantage of quantum interferometers in dispersion-cancelled interferometry.

*SuperErf refers to a specific class of nonlinearly chirped laser pulses based on antiderivatives of the error function.

Our paper “Ultrafast measurement of energy-time entanglement with an optical Kerr shutter” by Andrew Cameron, Kate Fenwick, Sandra L. Cheng, Sacha Schwarz, Benjamin MacLellan, Philip Bustard, Duncan England, Benjamin Sussman, and Kevin Resch was published in Physical Review A. This work is a collaborative effort between the University of Waterloo and the National Research Council of Canada.

Abstract: The energy-time degree of freedom has emerged as a promising avenue for photonic quantum technologies due to its intrinsic robustness against decoherence; however, the timescales associated with energy-time entangled photons make for difficult measurement and manipulation. We implement two optical Kerr gates, in short (35 mm) pieces of single-mode fiber, to achieve ultrafast measurement of the time correlations of energy-time entangled photon pairs, with resolutions of 320 ± 30 fs and 290 ± 30 fs for signal and idler photons, respectively. These measurements, in addition to joint spectral measurements of the photon-pair state, are used to verify entanglement by means of the violation of a time-bandwidth inequality. Without the need for an interferometric setup or frequency conversion, the optical Kerr shutter is a valuable addition to the ultrafast quantum optics control and metrology toolbox.

We have some new preprints out on the arXiv that stem from our collaboration with Rob Spekkens at the Perimeter Institute. The first presents a new way of operationally determining the ‘Generalized Probabilistic Theory’ that explains experimental results; we applied this method to optical 3-level systems, which quantum mechanics would describe as qutrits. The second applies different causal accounts to a Bell experiment and uses model selection techniques to determine the most likely explanation for the results.

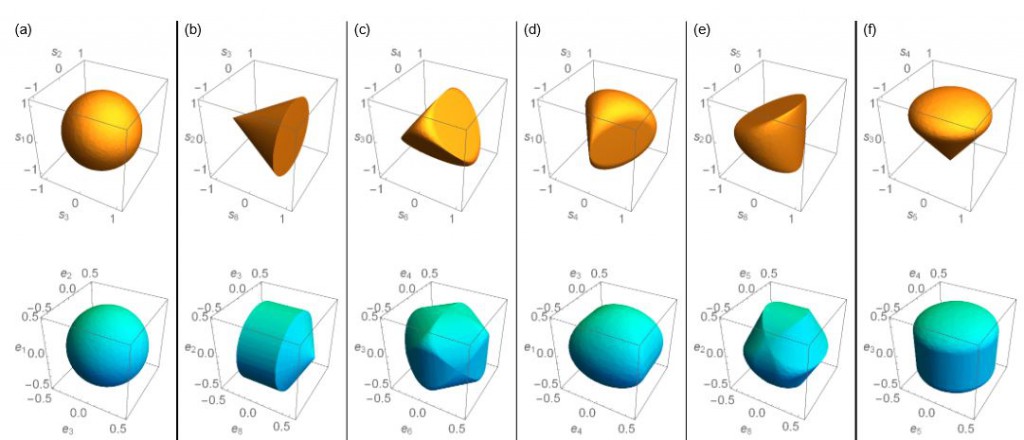

Experimentally bounding deviations from quantum theory for a photonic three-level system using theory-agnostic tomography

Michael Grabowecky, Christopher Pollack, Andrew Cameron, Robert Spekkens, Kevin Resch

Abstract: If one seeks to test quantum theory against many alternatives in a landscape of possible physical theories, then it is crucial to be able to analyze experimental data in a theory-agnostic way. This can be achieved using the framework of Generalized Probabilistic Theories (GPTs). Here, we implement GPT tomography on a three-level system corresponding to a single photon shared among three modes. This scheme achieves a GPT characterization of each of the preparations and measurements implemented in the experiment without requiring any prior characterization of either. Assuming that the sets of realized preparations and measurements are tomographically complete, our analysis identifies the most likely dimension of the GPT vector space describing the three-level system to be nine, in agreement with the value predicted by quantum theory. Relative to this dimension, we infer the scope of GPTs that are consistent with our experimental data by identifying polytopes that provide inner and outer bounds for the state and effect spaces of the true GPT. From these, we are able to determine quantitative bounds on possible deviations from quantum theory. In particular, we bound the degree to which the no-restriction hypothesis might be violated for our three-level system.

https://arxiv.org/abs/2108.05864

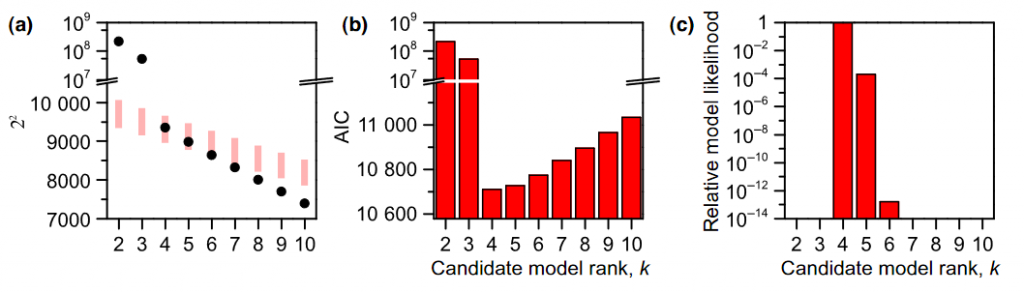

Experimentally adjudicating between different causal accounts of Bell inequality violations via statistical model selection

Patrick J. Daley, Kevin J. Resch, Robert W. Spekkens

Abstract: Bell inequalities follow from a set of seemingly natural assumptions about how to provide a causal model of a Bell experiment. In the face of their violation, two types of causal models that modify some of these assumptions have been proposed: (i) those that are parametrically conservative and structurally radical, such as models where the parameters are conditional probability distributions (termed ‘classical causal models’) but where one posits inter-lab causal influences or superdeterminism, and (ii) those that are parametrically radical and structurally conservative, such as models where the labs are taken to be connected only by a common cause but where conditional probabilities are replaced by conditional density operators (these are termed ‘quantum causal models’). We here seek to adjudicate between these alternatives based on their predictive power. The data from a Bell experiment is divided into a training set and a test set, and for each causal model, the parameters that yield the best fit for the training set are estimated and then used to make predictions about the test set. Our main result is that the structurally radical classical causal models are disfavoured relative to the structurally conservative quantum causal model. Their lower predictive power seems to be due to the fact that, unlike the quantum causal model, they are prone to a certain type of overfitting wherein statistical fluctuations away from the no-signalling condition are mistaken for real features. Our technique shows that it is possible to witness quantumness even in a Bell experiment that does not close the locality loophole. It also overturns the notion that it is impossible to experimentally test the plausibility of superdeterminist models of Bell inequality violations.
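The train/test procedure described in the abstract can be illustrated with a toy example. The sketch below is not the analysis from the paper: the data are simulated binomial counts, and the two models (`shared`, with a single probability for all settings, and `flexible`, with one probability per setting) are hypothetical stand-ins chosen only to show how a model with more freedom can fit training fluctuations and then be scored on held-out data.

```python
import numpy as np

# Toy data (an assumption for illustration): success counts for several
# measurement settings, all drawn from the same underlying probability.
rng = np.random.default_rng(1)
n_settings, n_train, n_test = 8, 50, 50
p_true = 0.3
train = rng.binomial(n_train, p_true, size=n_settings)
test = rng.binomial(n_test, p_true, size=n_settings)


def log_likelihood(successes, trials, p):
    """Binomial log-likelihood, dropping the constant binomial coefficient."""
    return float(np.sum(successes * np.log(p) + (trials - successes) * np.log(1 - p)))


# Fit each model on the training set only (light smoothing avoids log(0)).
p_shared = (train.sum() + 0.5) / (n_settings * n_train + 1.0)  # one parameter
p_flexible = (train + 0.5) / (n_train + 1.0)                   # one per setting

# Score each fitted model by how well it predicts the held-out test set.
score_shared = log_likelihood(test, n_test, p_shared)
score_flexible = log_likelihood(test, n_test, p_flexible)

print(f"test log-likelihood, shared model:   {score_shared:.2f}")
print(f"test log-likelihood, flexible model: {score_flexible:.2f}")
```

A higher test log-likelihood indicates better predictive power. Which model scores higher in a single run depends on the random draw, so a comparison of this kind would normally be repeated over many splits.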

https://arxiv.org/abs/2108.00053

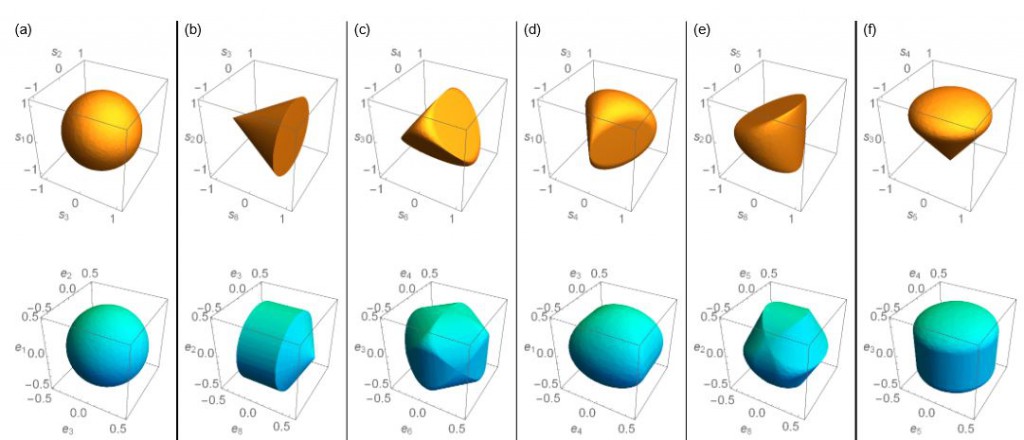

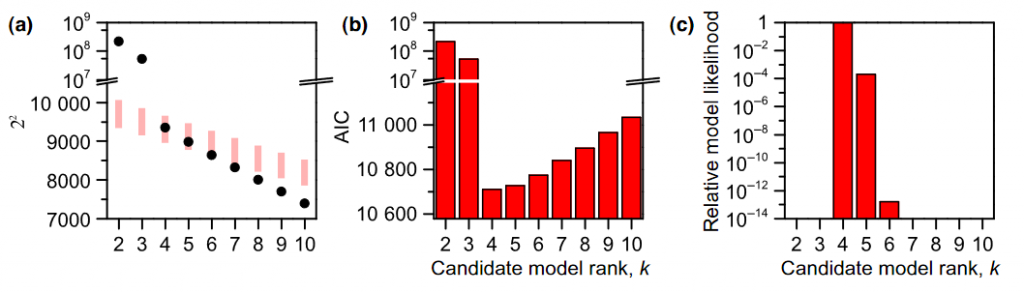

Our new paper Experimentally Bounding Deviations From Quantum Theory in the Landscape of Generalized Probabilistic Theories by Mike Mazurek, Matt Pusey, Kevin Resch, and Rob Spekkens was published in the journal PRX Quantum. This work is a collaboration with our colleagues from the Perimeter Institute for Theoretical Physics.

Abstract: Many experiments in the field of quantum foundations seek to adjudicate between quantum theory and speculative alternatives to it. This requires one to analyze the experimental data in a manner that does not presume the correctness of the quantum formalism. The mathematical framework of generalized probabilistic theories (GPTs) provides a means of doing so. We present a scheme for determining which GPTs are consistent with a given set of experimental data. It proceeds by performing tomography on the preparations and measurements in a self-consistent manner, i.e., without presuming a prior characterization of either. We illustrate the scheme by analyzing experimental data for a large set of preparations and measurements on the polarization degree of freedom of a single photon. We first test various hypotheses for the dimension of the GPT vector space for this degree of freedom. Our analysis identifies the most plausible hypothesis to be dimension 4, which is the value predicted by quantum theory. Under this hypothesis, we can draw the following additional conclusions from our scheme: (i) that the smallest and largest GPT state spaces that could describe photon polarization are a pair of polytopes, each approximating the shape of the Bloch sphere and having a volume ratio of 0.977±0.001, which provides a quantitative bound on the scope for deviations from the state and effect spaces predicted by quantum theory, and (ii) that the maximal violation of the Clauser, Horne, Shimony, and Holt inequality can be at most 1.3%±0.1 greater than the maximum violation allowed by quantum theory, and the maximal violation of a particular inequality for universal noncontextuality can not differ from the quantum prediction by more than this factor on either side. 
The only possibility for a greater deviation from the quantum state and effect spaces or for greater degrees of supraquantum nonlocality or contextuality, according to our analysis, is if a future experiment (perhaps following the scheme developed here) discovers that additional dimensions of GPT vector space are required to describe photon polarization, in excess of the four dimensions predicted by quantum theory to be adequate to the task.

(In the plot on the left the Y axis label should be chi^2)

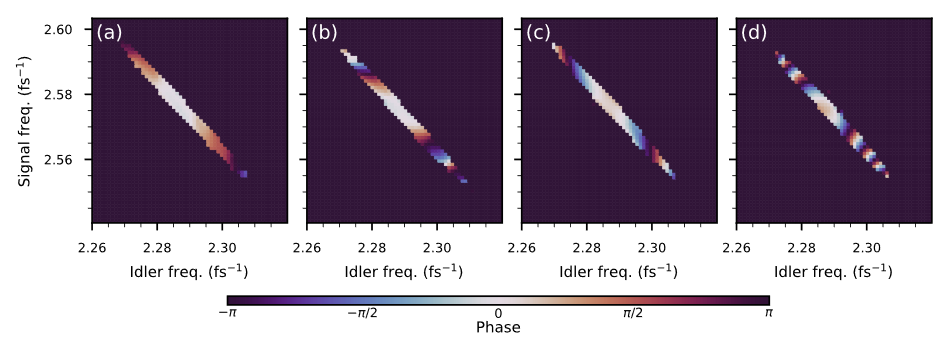

Our paper, Reconstructing ultrafast energy-time-entangled two-photon pulses, by JP, Sacha, and Kevin was published in PRA.

Abstract: The generation of ultrafast laser pulses and the reconstruction of their electric fields is essential for many applications in modern optics. Quantum optical fields can also be generated on ultrafast timescales; however, the tools and methods available for strong laser pulses are not appropriate for measuring the properties of weak, possibly entangled pulses. Here, we demonstrate a method to reconstruct the joint-spectral amplitude of a two-photon energy-time entangled state from joint measurements of the frequencies and arrival times of the photons, and the correlations between them. Our reconstruction method is based on a modified Gerchberg-Saxton algorithm. Such techniques are essential to measure and control the shape of ultrafast entangled photon pulses.
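For readers unfamiliar with the Gerchberg-Saxton algorithm, here is a minimal sketch of the textbook one-dimensional version. It is not the modified two-photon algorithm used in the paper. It alternates between the time and frequency domains, keeping the current phase estimate and replacing the magnitude with the measured one in each domain. The function name `gerchberg_saxton`, the synthetic chirped Gaussian pulse, and all parameter values are assumptions made for illustration.

```python
import numpy as np


def gerchberg_saxton(mag_time, mag_freq, n_iter=200, seed=0):
    """Estimate a complex field from its magnitudes in two Fourier-conjugate domains."""
    rng = np.random.default_rng(seed)
    # Start from the known time-domain magnitude with a random phase guess.
    phase = rng.uniform(0, 2 * np.pi, size=mag_time.shape)
    field_t = mag_time * np.exp(1j * phase)
    errors = []
    for _ in range(n_iter):
        # Go to the frequency domain (unitary FFT) and record the mismatch.
        field_f = np.fft.fft(field_t, norm="ortho")
        errors.append(np.linalg.norm(np.abs(field_f) - mag_freq))
        # Keep the phase, impose the measured frequency-domain magnitude.
        field_f = mag_freq * np.exp(1j * np.angle(field_f))
        # Return to the time domain and impose the measured magnitude there.
        field_t = np.fft.ifft(field_f, norm="ortho")
        field_t = mag_time * np.exp(1j * np.angle(field_t))
    return field_t, errors


# Synthetic test case: a chirped Gaussian pulse whose phase we pretend not to know.
t = np.linspace(-5, 5, 256)
true_field = np.exp(-t**2) * np.exp(1j * 2.0 * t**2)
mag_time = np.abs(true_field)
mag_freq = np.abs(np.fft.fft(true_field, norm="ortho"))

recovered, errors = gerchberg_saxton(mag_time, mag_freq)
print(f"frequency-domain mismatch: first {errors[0]:.3e}, last {errors[-1]:.3e}")
```

The recovered field matches the measured time-domain magnitude by construction. The recorded mismatch tracks how well it also reproduces the frequency-domain magnitude. Magnitude data alone leave some ambiguities, such as a global phase, so the recovered phase need not equal the original one exactly.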

The paper Ultrafast quantum interferometry with energy-time entangled photons by JP MacLean, John Donohue, and Kevin Resch was published in Physical Review A.

Abstract: Many quantum advantages in metrology and communication arise from interferometric phenomena. Such phenomena can occur on ultrafast timescales, particularly when energy-time entangled photons are employed. These have been relatively unexplored as their observation necessitates time resolution much shorter than conventional photon counters. Integrating nonlinear optical gating with conventional photon counters can overcome this limitation and enable subpicosecond time resolution. Here, using this technique and a Franson interferometer, we demonstrate high-visibility quantum interference with two entangled photons, where the one- and two-photon coherence times are both subpicosecond. We directly observe the spectral and temporal interference patterns, measure a visibility in the two-photon coincidence rate of (85.3±0.4)%, and report a Clauser–Horne–Shimony–Holt–Bell parameter of 2.42±0.02, violating the local-hidden variable bound by 21 standard deviations. The demonstration of energy-time entanglement with ultrafast interferometry provides opportunities for examining and exploiting entanglement in previously inaccessible regimes.
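The "21 standard deviations" figure follows directly from the numbers quoted in the abstract: the measured parameter, its uncertainty, and the local-hidden-variable bound of 2. A small check, with a helper name `sigmas_above_bound` introduced only for this illustration:

```python
def sigmas_above_bound(value, uncertainty, bound=2.0):
    """Number of standard deviations by which `value` exceeds `bound`."""
    return (value - bound) / uncertainty


# Values quoted in the abstract: S = 2.42 +/- 0.02, local-hidden-variable bound 2.
n_sigma = sigmas_above_bound(2.42, 0.02)
print(round(n_sigma))  # 21
```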

One of the images was selected for Kaleidoscope. Thanks Blender!

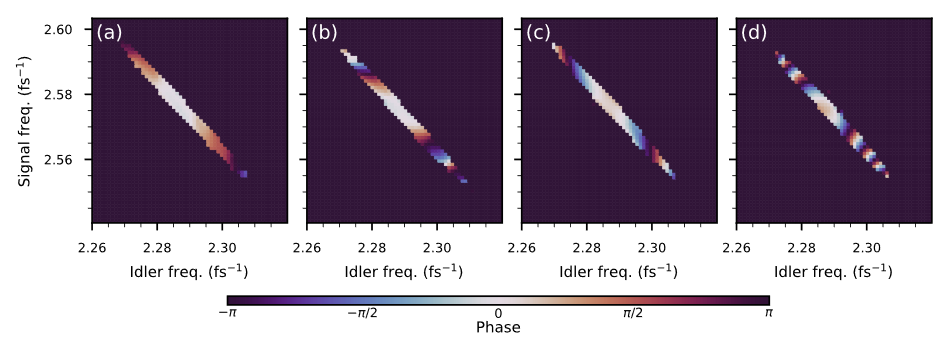

We have a new paper, Direct Characterization of Ultrafast Energy-Time Entangled Photon Pairs, by JP MacLean, John Donohue, and Kevin Resch that just appeared in Physical Review Letters. It was selected as an Editors’ Suggestion and featured in a Synopsis in Physics.

Abstract: Energy-time entangled photons are critical in many quantum optical phenomena and have emerged as important elements in quantum information protocols. Entanglement in this degree of freedom often manifests itself on ultrafast time scales, making it very difficult to detect, whether one employs direct or interferometric techniques, as photon-counting detectors have insufficient time resolution. Here, we implement ultrafast photon counters based on nonlinear interactions and strong femtosecond laser pulses to probe energy-time entanglement in this important regime. Using this technique and single-photon spectrometers, we characterize all the spectral and temporal correlations of two entangled photons with femtosecond resolution. This enables the witnessing of energy-time entanglement using uncertainty relations and the direct observation of nonlocal dispersion cancellation on ultrafast time scales. These techniques are essential to understand and control the energy-time degree of freedom of light for ultrafast quantum optics.

A collaborative work led by Thomas Jennewein’s group with our group, both at IQC and the Department of Physics & Astronomy, Gregor Weihs’ group from the University of Innsbruck, and Deny Hamel from the Université de Moncton has been chosen as a Physics World Top 10 Breakthrough for 2017. The original paper was published in Physical Review Letters:

Observation of Genuine Three-Photon Interference. Sascha Agne, Thomas Kauten, Jeongwan Jin, Evan Meyer-Scott, Jeff Z. Salvail, Deny R. Hamel, Kevin J. Resch, Gregor Weihs, and Thomas Jennewein, Phys. Rev. Lett. 118, 153602 (2017).

The citation from Physics World reads:

To Sascha Agne and Thomas Jennewein of the University of Waterloo and colleagues and Stefanie Barz, Steve Kolthammer and Ian Walmsley of the University of Oxford and colleagues for independently measuring quantum interference involving three photons. Seeing the effect is very difficult because it requires the ability to deliver three indistinguishable photons to the same place at the same time and also to ensure that single-photon and two-photon interference effects are eliminated from the measurements. As well as providing deep insights into the fundamentals of quantum mechanics, three-photon interference could also be used in quantum cryptography and quantum simulators.

Kent Fisher was awarded the WB Pearson medal for his outstanding PhD thesis, Photons & Phonons: A room-temperature diamond quantum memory. Congratulations Kent!

Also, welcome to Patrick who is (re)joining the group as an MSc candidate and welcome to Austin who is joining us for the summer after USEQIP!

About the Pearson award: This medal was created to honour Professor W.B. Pearson in recognition of his contribution to the University of Waterloo and to Canada as a research scientist and teacher. One medal will normally be awarded annually to a Doctoral student from each department in the Faculty of Science at the discretion of the department concerned in recognition of creative research as presented in the student’s thesis.

Photo: Kent Fisher and Dean of Science Bob Lemieux at the Science Award Ceremony held in conjunction with Convocation 2017.

Our paper, Quantum-coherent mixtures of causal relations, by JP MacLean, Katja Ried, Rob Spekkens and Kevin Resch was published today in Nature Communications. This work was the result of a collaboration between the Quantum Optics and Quantum Information group at the University of Waterloo (IQC/Physics) and the Perimeter Institute.

Abstract: Understanding the causal influences that hold among parts of a system is critical both to explaining that system’s natural behaviour and to controlling it through targeted interventions. In a quantum world, understanding causal relations is equally important, but the set of possibilities is far richer. The two basic ways in which a pair of time-ordered quantum systems may be causally related are by a cause-effect mechanism or by a common cause acting on both. Here we show a coherent mixture of these two possibilities. We realize this nonclassical causal relation in a quantum optics experiment and derive a set of criteria for witnessing the coherence based on a quantum version of Berkson’s effect, whereby two independent causes can become correlated on observation of their common effect. The interplay of causality and quantum theory lies at the heart of challenging foundational puzzles, including Bell’s theorem and the search for quantum gravity.
